The latency threshold for Storage I/O Control (SIOC) can now be set automatically. The benefit is that SIOC works out the best latency threshold for each datastore, as opposed to relying on a default or user-selected value.
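As a rough illustration of what automatic threshold selection could look like, the sketch below picks the latency observed at a fixed fraction of peak throughput from a set of load measurements. The text above does not describe SIOC's actual algorithm, so the 90% fraction, the function name `pick_latency_threshold`, and the sample data are all assumptions made for illustration.

```python
from typing import Sequence, Tuple


def pick_latency_threshold(
    samples: Sequence[Tuple[float, float]], fraction: float = 0.9
) -> float:
    """Return the lowest latency (ms) at which throughput reaches
    `fraction` of the peak throughput seen in `samples`.

    samples: (latency_ms, throughput_iops) pairs measured at increasing load.
    fraction: assumed share of peak throughput (hypothetical default).
    """
    if not samples:
        raise ValueError("need at least one sample")
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    peak = max(iops for _, iops in samples)
    target = fraction * peak
    # Every sample that already delivers the target throughput is a candidate;
    # the smallest latency among them is the chosen threshold.
    return min(lat for lat, iops in samples if iops >= target)


# Hypothetical measurements for one datastore: (latency_ms, throughput_iops)
samples = [
    (2.0, 1000.0),
    (5.0, 4000.0),
    (10.0, 7000.0),
    (18.0, 9200.0),
    (30.0, 10000.0),
    (60.0, 10000.0),
]
print(pick_latency_threshold(samples))
```

With these made-up numbers, throughput stops improving meaningfully beyond 18 ms, so a threshold near that point lets the datastore run close to its peak without allowing latency to grow for no gain.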


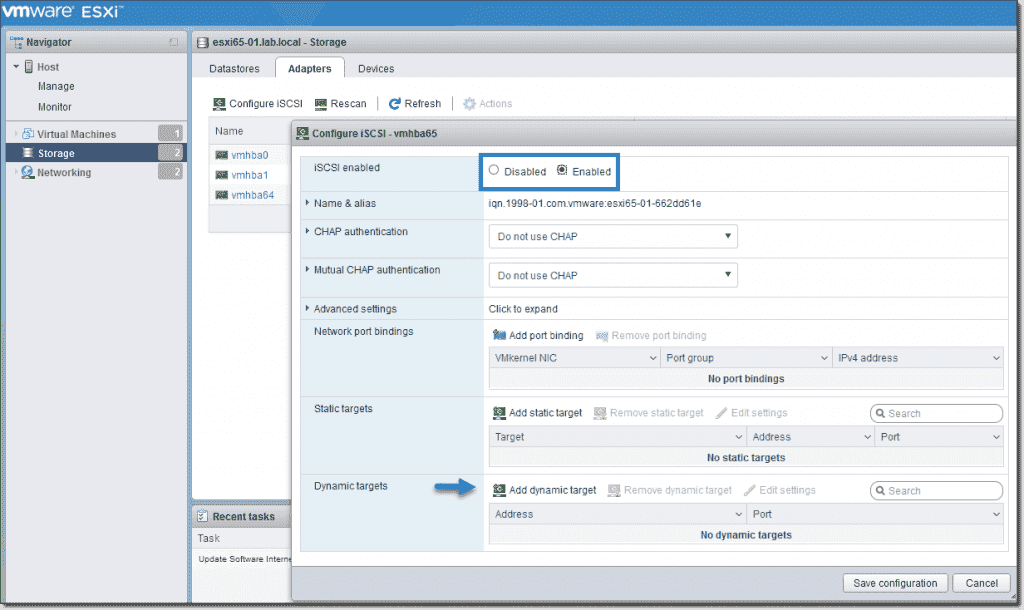

vSphere 5.0 introduced the offloading of snapshots to the storage array for VMware View via the VAAI NAS primitive ‘Fast File Clone’. vSphere 5.1 will allow VAAI NAS based snapshots to be used for vCloud Director in addition to VMware View, enabling the use of hardware/native snapshots for linked clones.

Historically, VMware only ever supported 2-node MSCS clusters. With vSphere 5.1, we are extending this to 5 nodes.

In vSphere 5.1, the objective is to handle the next set of All Paths Down (APD) use cases involving more complex transient APD conditions. This involves timing out I/O on devices that enter an APD state. When the timer expires, any I/O sent to the device will be immediately ‘fast failed’, meaning that we do not tie up hostd waiting for I/O. Another enhancement is the introduction of Permanent Device Loss (PDL) for those iSCSI arrays which present one LUN per target. This was problematic in the past since an APD removed the target as well as the LUN.

Storage Protocol Enhancements

FCoE: The Boot from Software FCoE feature is very similar to the Boot from Software iSCSI feature which VMware introduced in ESXi 4.1. It allows an ESXi 5.1 host to boot from an FCoE LUN using a NIC with special FCoE offload capabilities and VMware’s software FCoE driver.

iSCSI: We are adding jumbo frame support for all iSCSI adapters in vSphere 5.1, complete with UI support.

Fibre Channel: VMware introduced support for 16Gb FC HBAs with vSphere 5.0. However, the 16Gb HBA had to be set to work at 8Gb. vSphere 5.1 introduces support for 16Gb FC HBAs running at 16Gb.

Advanced IO Device Management (IODM) & SSD Monitoring

IODM introduces new esxcli commands to help administrators troubleshoot issues with I/O devices and fabric. This covers Fibre Channel, FCoE, iSCSI and SAS protocol statistics, and SMART attributes. For SSD monitoring, a new smartd module in ESXi 5.1 will be used to provide wear leveling and other SMART details for SAS and SATA SSDs. Disk vendors also have the ability to install their own SSD plugins to display vendor-specific SSD info.
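The APD handling described above (start a timer when a device enters APD, then fast-fail I/O once the timer expires) can be sketched as a small state machine. This is an illustrative model only, not ESXi code: the class name, method names, and return strings are hypothetical, and the 140-second timeout is an assumed value that should be checked against the host's actual APD timeout setting.

```python
from typing import Optional


class ApdDevice:
    """Toy model of a storage device with an APD timeout (hypothetical)."""

    def __init__(self, timeout_s: float = 140.0) -> None:
        self.timeout_s = timeout_s  # assumed timeout, in seconds
        self.apd_since: Optional[float] = None  # when APD began, if at all

    def enter_apd(self, now: float) -> None:
        # Start the timer only on the first transition into APD.
        if self.apd_since is None:
            self.apd_since = now

    def exit_apd(self) -> None:
        # Paths are back: clear the timer so I/O flows normally again.
        self.apd_since = None

    def submit_io(self, now: float) -> str:
        if self.apd_since is None:
            return "issued"
        if now - self.apd_since >= self.timeout_s:
            # Timer expired: fail immediately instead of blocking the caller.
            return "fast-failed"
        # Still inside the timeout window: the I/O is held and retried.
        return "queued-for-retry"


dev = ApdDevice(timeout_s=140.0)
results = [dev.submit_io(now=0.0)]        # healthy device
dev.enter_apd(now=10.0)
results.append(dev.submit_io(now=60.0))   # 50 s into APD
results.append(dev.submit_io(now=150.0))  # 140 s into APD, timer expired
dev.exit_apd()
results.append(dev.submit_io(now=200.0))  # device recovered
print(results)
```

Passing the clock in as `now` keeps the model deterministic, so the transition from retrying to fast-failing can be checked without waiting for real time to pass.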

The following is a list of the major storage enhancements introduced with the vSphere 5.1 release.

In previous versions of vSphere, the maximum number of hosts which could share a read-only file on a VMFS volume was 8. The primary use case for multiple hosts sharing read-only files is of course linked clones, where linked clones located on separate hosts all share the same base disk image. In vSphere 5.1, with the introduction of a new locking mechanism, the number of hosts which can share a read-only file on a VMFS volume has been increased to 32. This makes VMFS as scalable as NFS for VDI deployments and vCloud Director deployments which use linked clones.

Space Efficient Sparse Virtual Disks

A new Space Efficient Sparse Virtual Disk (SE Sparse Disk) aims to address certain limitations with virtual disks. The first of these is the ability to reclaim stale or stranded data in the Guest OS filesystem/database. SE Sparse Disks introduce an automated mechanism for reclaiming stranded space. The other feature is a dynamic block allocation unit size. SE Sparse Disks have a new configurable block allocation size which can be tuned to the recommendations of the storage array vendor, or indeed the applications running inside the Guest OS. VMware View is the only product that will use the new SE Sparse Disk in vSphere 5.1.
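To make the effect of the higher read-only sharing limit concrete, the sketch below estimates how many copies of a base disk image a linked-clone pool would need if each copy can be shared by at most a given number of hosts. The function name and the 32-host pool are hypothetical, and the model deliberately ignores every other sizing constraint.

```python
def base_disk_copies(hosts: int, max_hosts_per_file: int) -> int:
    """Minimum number of base disk copies so that no copy is shared
    read-only by more than `max_hosts_per_file` hosts."""
    if hosts < 0:
        raise ValueError("hosts must be non-negative")
    if max_hosts_per_file < 1:
        raise ValueError("max_hosts_per_file must be at least 1")
    # Integer ceiling division: round up without going through floats.
    return -(-hosts // max_hosts_per_file)


# Hypothetical pool of 32 hosts, under the old limit (8) and the new one (32)
for limit in (8, 32):
    print(f"limit {limit}: {base_disk_copies(32, limit)} base disk copies")
```

Under these assumptions, a pool that previously needed four copies of the base image can share a single copy once the limit is 32.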